I. Biological information and Aristotelian form - II. Heuristic operative definitions of information. 1. The classical theory of information. 2. The theory of complex specified information. 3. The algorithmic theory of information. - III. Main characters of information - IV. Emergence and evolution of biological information - V. Suggestions for philosophy and theology.

I. Biological information and Aristotelian form

This article presents recent perspectives on the increasingly significant role of information in the biological sciences, starting from the early decades of the 21st century. This role concerns topics such as the evolution of species and the emergence of life. In a wider sense, information seems to play a key role in the emergence (self-organization) of the structure and dynamics of complex physical and biochemical systems. Thus, the topic of information requires an interdisciplinary approach encompassing several disciplines, such as mathematics, informatics, physics and, ultimately, biology itself. Moreover, from a philosophical viewpoint, the emerging concept of information shares non-trivial features with the Aristotelian-Thomistic notion of form. It is therefore worth briefly introducing that notion. The form of an entity X is what makes X what it really is; X’s form consists of X’s essential properties. The Aristotelian-Thomistic form can be understood as the specific configuration that makes an entity what it really is.

Nowadays, biologists, physicists of non-linear systems, computer scientists and philosophers collaborate closely to investigate new simulation models and theories about the emergence of life, the formation of organs in an organism, and the mutation of species. Most of these topics involve relevant philosophical problems related to the open and unavoidable quest for an ontological interpretation of such theories, besides suggesting heuristic paths for future research.

There is now particular interest in proposing a definition of information more fundamental and relevant than the traditional one developed in communication engineering for the purpose of minimizing noise. Steps forward have been made thanks to the analogy between information and negative thermodynamic entropy, developed by several authors studying the non-equilibrium thermodynamics of open systems that exchange matter, energy and information with the environment. In this context, the emergence of ordered structures within open thermodynamic systems, which appeared to be governed by a sort of teleonomic dynamics, has suggested that such systems might provide models for biological systems and for the emergence of life itself. Indeed, the non-linear mechanics of dynamical systems has revealed the existence of attractors. An attractor is a state towards which all the trajectories of the system’s temporal evolution whose initial conditions belong to a suitable basin of attraction tend in the long run. Attractors may be stable or unstable depending on their characteristic parameters, and may switch from stability to instability depending on the values assumed by those parameters. (Starting from the seminal work by Arnold, Ordinary differential equations, 1992, an impressive amount of literature has been developed to date on the topic.) The similarity between this kind of physical behavior and the passage of a living system from life to death was regarded as straightforward. Moreover, some properties of non-linear systems appeared to be global (holistic) and not reducible to a sum of more elementary local properties. So the idea that some information characterizes the structure and the dynamics of the whole, and is not deducible from the properties of the parts taken independently of the whole, quite naturally suggested comparing our contemporary notion of information with the ancient but always fascinating Aristotelian notion of form.
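As a minimal illustration of an attractor and of its loss of stability when a parameter changes, one may take the logistic map x → r·x·(1 − x), a standard textbook system that is not discussed in the works cited here. The function names and the parameter values (r = 2.5 and r = 3.2) are assumptions of this sketch. For r = 2.5, trajectories starting from different initial conditions in the interval (0, 1) tend to the same fixed point 1 − 1/r; for r = 3.2 the same fixed point still exists but has become unstable, and trajectories move away from it.

```python
def iterate_logistic(r, x0, steps=1000):
    """Iterate the logistic map x -> r*x*(1-x) and return the final state."""
    x = x0
    for _ in range(steps):
        x = r * x * (1.0 - x)
    return x


def fixed_point(r):
    """Non-trivial fixed point of the logistic map, x* = 1 - 1/r."""
    return 1.0 - 1.0 / r


if __name__ == "__main__":
    for r in (2.5, 3.2):
        # Three different initial conditions inside the interval (0, 1).
        finals = [iterate_logistic(r, x0) for x0 in (0.1, 0.5, 0.9)]
        print("r =", r, "fixed point =", fixed_point(r), "final states =", finals)
```

The one-dimensional map is chosen only for brevity; the attractors mentioned in the text belong, in general, to systems of differential equations.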

Those ideas have also been applied to biological species and not only to individual living beings. Here, the intriguing question is whether a sort of information may somehow orient species evolution by allowing attractors and repellers to be effective even if the initial conditions are determined by chance, or whether, on the contrary, chance and natural selection are enough to generate life and govern evolution.

At present two schools of thought are in competition (cf. Marks II et al., 2014): a) one defends a neo-Darwinian position according to which random genetic mutations alone are enough to explain an evolution that improves the qualities of species through the spontaneous emergence of new information; b) the other, on the contrary, suggests that chance may not be enough to explain a gain in species evolution, since an adequate cause is required in order to activate the emergence of new information from the potentiality of matter (ibidem, “General Introduction”, pp. xiii-xix). The latter position echoes, though in a greatly different historical and cultural context, Aristotle’s proposal.

Therefore, interest in the Aristotelian doctrine of form appears less odd today than it did only a few decades ago. Surprisingly, experimental investigations and, above all, computer simulations provide relevant results supporting the views of this second school of thought. Indeed, simulations show that the great majority of mutations are not actually advantageous for the organism, as they do not improve the fitness of the mutant individuals; only very few do so. Mutations are accompanied by a sort of increasing genetic entropy, which destroys information rather than increasing it. The situation resembles the behavior of thermodynamic entropy, whose increase, according to the second principle, reduces the mechanical work obtainable from heat. Genetic mutations generally cause more disorder (loss of information) than order (organization). Moreover, so-called point mutations turn out not to be genetically permanent, since they tend to disappear in the course of a few generations. It has been shown that there is a threshold (a minimum number of mutant individuals in a population) below which mutations, whether damaging or improving, die out after a few generations (cf. Gibson, Baumgardner, Brewer and Sanford, 2014, pp. 105-138). Therefore, biological evolution seems not to be entirely explainable by random point mutations alone.

Up to now, computer simulations (e.g. programs such as Tierra, Mendel and Avida, which simulate the random mutations involved in species evolution; cf. Ewert, Dembski and Marks, 2014, pp. 105-138; Nelson and Sanford, 2014, pp. 232-263) have provided results not in agreement with a merely random mechanism at the basis of the adaptation of species. Consequently, researchers have been led to consider information as a new non-material factor playing an essential (though not yet fully understood) role in governing the evolution of species, as well as prior to the birth of life and before the emergence of ordered structures in complex systems.

In this framework at least two main problems arise. How can a model of information be defined and provided? What can be the cause of the emergence of information in material systems (carrying mass and energy)? One might rephrase the latter question in Aristotelian terminology as follows: what is the adequate cause of the eduction (Lat. eductio) of the form from the potency of matter? It is worth mentioning that the “eduction of a form from the potency of matter” (Lat. eductio formae de potentia materiae) is the doctrine according to which a being, which, insofar as it is in act, has its own form, can educe (“bring out”) a (new) form in another being out of the potency and dispositions of the matter of the latter.

The reductionist and materialist approach that attempts to explain information as a mass-energy phenomenon, identifying it with its material carrier, has been widely deemed inadequate. As a matter of fact, we experience daily how information can be transferred from one material support to another without alteration of its informational content. Since the early days of telecommunications and cybernetics, what Norbert Wiener, one of the fathers of information theory, said has appeared evident: “Information is information, not matter or energy. No materialism which does not admit this can survive at the present day” (Wiener, 1965, p. 132).

II. Heuristic operative definitions of information

Progress has been made over time in the attempt to achieve a proper definition of information. Starting from purely descriptive definitions, based on a physical and statistical approach stemming from the comparison with thermodynamics and statistical mechanics, steps have been made towards more abstract and causally explanatory definitions. Reference can be found to at least the following kinds of theories of information and related definitions: a) the classical theory of information; b) the theory of complex specified information; c) the algorithmic theory of information; d) the universal theory of information (cf. Gitt, Compton and Fernandez, 2014, pp. 11-25); e) the pragmatic theory of information, which is concerned with the cost of the machinery and networks required to process information (cf. Oller, Jr., 2014, pp. 64-86).

Some of the above definitions, to be examined shortly, also seem relevant for their philosophical implications, though none of them appears to be exhaustive. Thus, their approach to the notion of information is to be considered heuristic, operative and in progress: steps towards a more essential definition. “Essential” here refers to a definition that captures what information properly is in itself, and not only as related to aspects coexisting with it, such as the string by which it is coded, the different kinds of memory on which it is stored, the costs required to process it, and so on. The Aristotelian notion of form proves helpful, and somewhat clarifying, for this task.

1. The classical theory of information. The classical theory of information, originally elaborated by Claude Shannon (cf. Shannon and Weaver, 1964), is based on statistical mechanics and has the engineering purpose of minimizing undesired signals (noise), so that the carrier of the relevant information to be transmitted from a sender to a receiver is emphasized. To this aim, one can limit the object of investigation to the syntactic statistical analysis of the symbols as such, which are required to transmit data (e.g., along cables or ether), to store them in physical memories, and to process them. Shannon’s original idea was to relate the notion of information to the probability that some event happens or not, the behavior of which seemed to him very similar to that of negative thermodynamic entropy. So he conjectured a definition of information as $I = -\log_b P$, where $I$ is interpreted as a measure of information, $P$ as the probability of occurrence of the event, and $b$ as the base of the numeric code employed. If a very likely event happens, very little information is gained. On the contrary, if it does not occur (or the opposite event does happen), we are more informed, and feel almost compelled to investigate that phenomenon more deeply. The formula is the same as that of the thermodynamic entropy, except for the minus sign (cf. Sarti, Information, notion of).
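The behavior of the measure I = −log_b P can be checked numerically. The following is a minimal sketch; the function name `self_information` and the default base 2 (which gives the result in bits) are assumptions introduced only for illustration.

```python
import math


def self_information(p, base=2):
    """Return I = -log_b(p) for an event of probability p, with 0 < p <= 1."""
    if not 0.0 < p <= 1.0:
        raise ValueError("p must lie in the interval (0, 1]")
    # -log_b(p) is written as log_b(1/p) so that p = 1 yields 0.0, not -0.0.
    return math.log(1.0 / p, base)


if __name__ == "__main__":
    for p in (0.99, 0.5, 0.01):
        print("P =", p, " I =", self_information(p), "bits")
```

A very likely event (P close to 1) carries almost no information, while a rare one carries much more, in agreement with the qualitative remark above.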

2. The theory of complex specified information. The theory of complex specified information was proposed by William Dembski to add to classical information theory a sort of finality criterion orienting chance towards some result at the end of a process. The main problem of such an approach is the lack of a mathematical or symbolic formalization of this teleonomic factor, which thus remains an extrinsic philosophical conjecture. Therefore, the theory is often regarded as non-scientific, as is the entire approach of so-called intelligent design (cf. Gitt, Compton and Fernandez, 2014, p. 17). Finality may legitimately enter a scientific theory if it turns out to be a part or a consequence (e.g., as a mathematical solution) of the laws (equations) governing a complex system, be it physical, biological or other (cf. Strumia, Mechanics, §VI, 1). In other words, the introduction of a novel element in a theory (such as the effectiveness of some sort of finality in the phenomena addressed by the theory of complex systems) is allowed only if, without such an introduction, the internal axioms of the theory lose coherence or reveal a contradiction.

3. The algorithmic theory of information. At present, it seems that the approach of the algorithmic theory of information, adequately enriched by a semantic interpretation, is the most promising one, both for building a mature scientific theory of information contributing to the physics of complex systems and to biology, and for philosophy. The theory of algorithmic information, developed by Ray Solomonoff, Andrej Nikolaevič Kolmogorov and Gregory Chaitin, is concerned with the complexity (as suitably defined within the theory itself) of the symbols involved in data and object structures.

First of all, a definition of algorithm is required. “An algorithm is a sequence of operations capable of bringing about the solution to a problem in a finite number of steps” (Sarti, Information, notion of, §V). This definition is wide enough to encompass different kinds of algorithms involving different levels of information, progressively approaching the Aristotelian notion of form. Some examples will help to clarify the methodological and epistemological relevance of the corresponding levels, as well as the implications for biology, the theory of foundations, and even philosophy.

Some examples of algorithms. Let us consider three simple, well-known examples of algorithms, and emphasize the different levels of information involved in each one. a) The first level consists of a simple sequence of operations required to solve some problem. In this case, information is merely operational and does not involve any sort of definition of an entity. From an Aristotelian-Thomistic point of view, it looks like an accidental mutation of some entity built as a cluster (aggregate) of substances, which is not endowed with a unique substantial form. b) The second level, as we will see, is ontologically more relevant, since it actually defines an entity by determining its structure. Philosophically, we can say that the information involved in the algorithm properly defines the essence of an entity, just as an Aristotelian form does. c) The third level also defines an entity, by characterizing the dynamics which generates its structure rather than immediately defining the structure. In Aristotelian-Thomistic terminology, the information involved in the algorithm specifies the nature of the entity itself. Let us now examine some convenient examples.

a) Algorithm to exchange the liquids contained in two different glasses. Let us consider two glasses, say A and B, filled with water and wine, respectively. Suppose one wants to transfer the water from A to B, and the wine from B to A (A, B → B, A). The problem is easily solved with the aid of a third, empty glass C. The required algorithm is then the following: 1) pour the water from A to C; 2) pour the wine from B to A; 3) pour the water from C to B (A → C, B → A, C → B). The desired result is thus obtained. Note that the algorithm simply describes an operative procedure bringing about a change (becoming), and neither defines nor gives consistency (being) to any entity. Let us now examine a second kind of algorithm, one actually able to define the structure (essence) of a new entity.

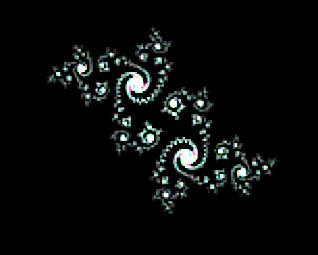
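The three pouring steps of example a) can be transcribed almost literally into a short program. This is only a sketch: the dictionary representation of the glasses and the names `swap_glasses` and `pour` are assumptions made for illustration.

```python
def swap_glasses(glasses):
    """Exchange the contents of glasses 'A' and 'B' using the empty glass 'C'."""
    state = dict(glasses)  # work on a copy, leaving the input untouched
    if state["C"] is not None:
        raise ValueError("glass C must start empty")

    def pour(src, dst):
        # The destination receives the liquid and the source becomes empty.
        state[dst], state[src] = state[src], None

    pour("A", "C")  # 1) water from A to C
    pour("B", "A")  # 2) wine from B to A
    pour("C", "B")  # 3) water from C to B
    return state


if __name__ == "__main__":
    print(swap_glasses({"A": "water", "B": "wine", "C": None}))
```

The program only rearranges what is already given: it brings about a change without defining any new entity, which is the point of this first level.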

b) Algorithm to generate a fractal. Roughly speaking, a fractal can be characterized as an infinitely rippled curve or surface whose level of complexity is preserved at any magnification scale. Fractals are more precisely classified by their fractal dimension, a measure of the fraction of plane or space they fill as a whole. Several fascinating publications, as well as an array of websites, have been devoted to fractals (cf. Mandelbrot, 1982; Peitgen and Saupe, 1988; Peitgen and Richter, 1986-2018; and also Fractal gallery). What is remarkable is that the mathematical computation generating a fractal, besides providing an operational procedure, properly defines an entity in actualizing it. An interesting example of a fractal is the so-called “Julia set”. Considering that a complex number has the general form $z = x + iy$, where $x$, $y$ are two real numbers and $i$ is the imaginary unit, i.e., a number whose square is, by definition, $-1$, the algorithm is the following: i) consider a complex number $z_0 = x_0 + i y_0$, where the real part ($x_0$) and the imaginary part ($y_0$) lie in a suitable interval $[-l, l]$; ii) choose another complex number $c = a + ib$, which is kept constant throughout the whole procedure, as an identifier of the Julia set itself; iii) define a sequence of complex numbers $z_n = x_n + i y_n$, $n = 0, 1, 2, \dots$, whose initial term is just $z_0$ and in which each subsequent number is obtained by adding $c$ to the square of the previous one. Thus, one has the recurrence rule $z_{n+1} = z_n^2 + c$. iv) Now take the sum of a significantly high number of subsequent terms of the sequence (in principle the infinite series of all the terms of the sequence should be taken, but in practice, on a computer, only a finite number of terms can be added; the greater the number of terms, the better the picture). v) Finally, evaluate the absolute value $h$ of the sum obtained (where the absolute value, or modulus, of a complex number $z = x + iy$ is given by $|z| = \sqrt{x^2 + y^2}$).
If $h$ is greater than a suitable, previously established value $R$, we paint on a computer display the pixel of coordinates $(x_0, y_0)$ with a precise color (or, respectively, gray level) of a suitable color map (or grayscale). Beautiful images may be found on several fractal web sites.

Manifestly, besides providing an operating procedure, the algorithm defines the structure of a new entity, namely a Julia set, while constructing it.
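A minimal computational sketch of example b) follows. It iterates the recurrence rule of step iii), but, as a deliberate substitution, it uses the widely adopted escape-time test (stop as soon as the modulus of the current term exceeds R) instead of the sum of terms described in steps iv) and v). The function names, the grid size, the threshold R = 2 and the constant c = −0.8 + 0.156i are assumptions chosen only for illustration, and the picture is rendered with text characters rather than colors.

```python
def escape_count(z0, c, radius=2.0, max_iter=100):
    """Number of steps of z -> z*z + c taken before |z| exceeds radius.

    Returns max_iter if the orbit stays within the radius for all tested steps.
    """
    z = z0
    for n in range(max_iter):
        if abs(z) > radius:
            return n
        z = z * z + c
    return max_iter


def julia_grid(c, half_width=1.5, size=41, **kwargs):
    """Escape counts for a size x size grid of starting points in [-l, l]^2."""
    step = 2.0 * half_width / (size - 1)
    return [
        [
            escape_count(
                complex(-half_width + i * step, half_width - j * step), c, **kwargs
            )
            for i in range(size)
        ]
        for j in range(size)
    ]


if __name__ == "__main__":
    c = complex(-0.8, 0.156)  # illustrative constant, not taken from the text
    for row in julia_grid(c):
        # '#' marks starting points whose orbit never left the radius.
        print("".join("#" if n == 100 else "." for n in row))
```

Changing only the constant c produces a different figure, which reflects the remark in step ii) that c acts as the identifier of the Julia set.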

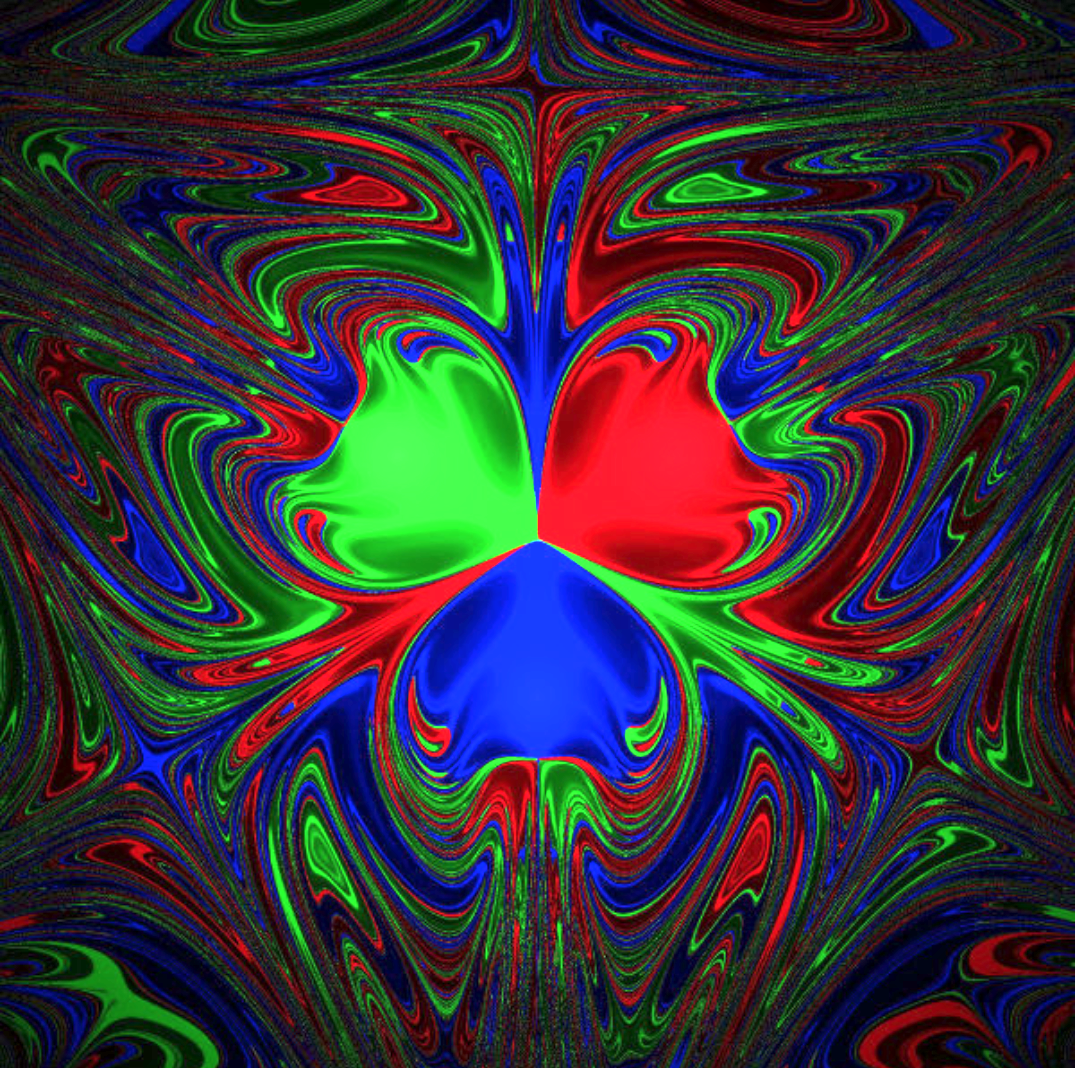

c) Algorithm to determine a fractal basin of attraction of a chaotic magnetic pendulum. The third example is provided by physics rather than mathematics. It, too, consists of a fractal set, whose structure results from the chaotic dynamics governing a magnetic pendulum driven by three magnets located at the vertices of an equilateral triangle. When observed over some time interval, the pendulum motion appears to be totally random, without any regularity or order. Each trajectory seems to end at one of the magnets without any criterion of choice. Nevertheless, the dynamics is driven by precise information arising from the laws of physics, since the arrival magnet depends exactly on the starting point from which the pendulum is initially released. Since the pendulum dynamics is complex (determined by non-linear laws), it turns out to be strongly sensitive to the initial conditions. If the starting point is even slightly displaced, the arrival magnet may change. So the basin of attraction (set of the initial conditions) related to the dynamics of the pendulum exhibits a quite precise fractal structure. It is worth noting that, in the present example, the information which builds the fractal structure of the basin of attraction is determined by the dynamics of motion. Graphically, the fractal basin can be represented by assigning a distinct color to the trajectories ending at each distinct magnet.
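The construction of example c) can be sketched with a toy planar model. Everything quantitative here is an assumption of the sketch, not data from the text: a linear restoring force towards the center, linear friction, an attraction to each magnet placed at a small distance below the plane of motion, the numerical values of all parameters, and the simple integration scheme. Each starting point is labeled with the index of the magnet nearest to the pendulum at the end of the simulated time.

```python
import math

# Three magnets at the vertices of an equilateral triangle (unit circumradius).
MAGNETS = [
    (math.cos(2 * math.pi * k / 3), math.sin(2 * math.pi * k / 3))
    for k in range(3)
]


def final_magnet(x, y, height=0.2, spring=0.5, friction=0.2, dt=0.01, steps=5000):
    """Index (0, 1 or 2) of the magnet nearest to the pendulum at the end."""
    vx = vy = 0.0  # the pendulum is released at rest
    for _ in range(steps):
        # Restoring force towards the origin plus linear friction.
        ax = -spring * x - friction * vx
        ay = -spring * y - friction * vy
        # Attraction to each magnet lying `height` below the plane of motion.
        for mx, my in MAGNETS:
            dx, dy = mx - x, my - y
            d3 = (dx * dx + dy * dy + height * height) ** 1.5
            ax += dx / d3
            ay += dy / d3
        # Semi-implicit Euler step: update velocity first, then position.
        vx += ax * dt
        vy += ay * dt
        x += vx * dt
        y += vy * dt
    return min(
        range(3),
        key=lambda k: (MAGNETS[k][0] - x) ** 2 + (MAGNETS[k][1] - y) ** 2,
    )


def basin_map(size=21, extent=2.0):
    """Coarse text picture of the basins: one character per starting point."""
    step = 2.0 * extent / (size - 1)
    return [
        "".join(
            "012"[final_magnet(-extent + i * step, extent - j * step)]
            for i in range(size)
        )
        for j in range(size)
    ]


if __name__ == "__main__":
    for line in basin_map():
        print(line)
```

A grid this coarse can only hint at the intertwining of the three basins; a finer grid and a more accurate integrator would be needed before drawing any conclusion about their fractal structure.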

Remark. People investigating algorithmic information are generally interested in defining the quantity of information involved in a computer program (algorithm), which is viewed simply as a code string. Accordingly, the shorter the code string of a program solving a problem, the richer the information it is considered to contain. This is a matter of efficiency: minimizing machine time and, consequently, the cost of running the program. But it is well known that not every problem is computable, since a string including an infinite number of characters, in many cases, cannot be compressed into a shorter one (cf. Chaitin, 1987 and 2007; Wolfram, 2002). Moreover, strings including a finite number of characters often cannot be compressed either. In the language of set theory, a similar circumstance arises because only a class of sets may be defined by a law (shorter string) according to which their elements are generated, thanks to the axiom of replacement. All the remaining sets can be defined only by listing their elements one by one (i.e., they represent incompressible strings). With reference to Gödel’s theorem (cf. Gödel [1931], 2001), the same problem can be seen in terms of either decidable propositions, which correspond to a computable Gödel number, or undecidable propositions, related to non-computable Gödel numbers. Thus, saying that not all numbers are computable means that there is no formula (shorter string) enabling us to evaluate all their digits without listing them one by one. As a consequence, not all the activities of physical dynamical systems, and especially of biological and cognitive ones, are computable. The irreducible qualitative and properly ontological aspects of their behavior have acquired great scientific relevance besides their philosophical importance.
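The contrast between strings generated by a short law and strings that must be listed character by character can be made tangible with an ordinary compressor. This is only a rough proxy, stated here as an assumption: the length of a zlib-compressed string is a crude, computable upper estimate, whereas algorithmic (Kolmogorov) complexity itself is not computable. The names used below are illustrative.

```python
import random
import zlib


def compressed_size(data: bytes) -> int:
    """Length in bytes of the zlib-compressed form of data (maximum level)."""
    return len(zlib.compress(data, 9))


# 1000 bytes generated by a very short rule: repeat "ab" 500 times.
regular = b"ab" * 500

# 1000 bytes drawn from a seeded pseudo-random generator (for reproducibility).
rng = random.Random(0)
irregular = bytes(rng.getrandbits(8) for _ in range(1000))

if __name__ == "__main__":
    print("regular:  ", len(regular), "->", compressed_size(regular))
    print("irregular:", len(irregular), "->", compressed_size(irregular))
```

Strictly speaking, the second string is itself produced by a short program (the generator and its seed), so its algorithmic complexity is low; it merely looks patternless to zlib. This limitation of the proxy is a small instance of the gap between what a practical compressor detects and what the theory defines.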
Many of these non-computable aspects concern information and related algorithms, and a semantic approach beyond the purely syntactic one (as developed in classical information theory) now seems needed. The algorithm is here understood no longer as the string in which it is coded, like a sum (whole) of its characters (parts), but as a definition actualizing the dynamics of some resulting new entity. Thus, such a definition is a logical law defining an entity, a sort of ontological form/information actuating the structure (essence) and dynamics (nature) of that entity. It is worth emphasizing that such a notion of algorithm, along with the previous philosophical interpretation, seems to reveal a first, non-trivial, rigorously scientific attempt to approach the definition/essence of an entity, whose structure/organization and dynamics/nature are generated by the algorithm itself. Indeed, according to the Aristotelian-Thomistic ontology, the nature is just the essence as the principle of acting: “actio dependet a natura, quae est principium actionis” (Acting depends on nature, which is the principle of acting [Thomas Aquinas, In I Sent., Lib. 3, d. 18, q. 1, a. 1co]). And also: “nomen autem naturae hoc modo sumptae videtur significare essentiam rei, secundum quod habet ordinem vel ordinationem ad propriam operationem rei” (the word nature, so considered, appears to mean the essence of something, insofar as it is ordered to the thing’s proper operation) (Id. De Ente et Essentia, ch. 1). Thus, algorithmic information is more than a mere quantitative measure of information, and involves philosophical content. Scientific investigation of information unveils a semantic import and is attempting to grasp it with more and more suitable definitions. Information is recognized to be more than the compressed length of a code string.
Among the first mathematicians who approached in a rigorous way the problem of characterizing information, by careful comparison with the Aristotelian form, we have to mention René Thom (cf. Thom, 1989 and 1990). In the frame of the mathematical physics of non-linear dynamical systems, for instance, a relevant approach to form/information has been developed following a methodology known as qualitative analysis of motion. Similar models are applied, even in a biological context, to the evolution of species or the emergence of self-organization during the transition from non-living matter to organisms.

All this research has non-trivial philosophical relevance, since what is addressed, as a matter of fact, is the essence/nature of some entities by means of constructive definitions. More refined mathematical instruments will probably be required to formalize information in this way, and mathematics itself might reach the status of a true theory of entities (formal ontology). So information could involve both computable and non-computable aspects.

III. Main characters of information

What are the main characters of information that have been grasped by the different theories developed in science? In particular, one can see that the scientific definitions of information gradually approach some aspects of the Aristotelian-Thomistic notion of form. We are able to distinguish the proper elements of information (formal defining characters) from the features of its material support (cf. Gitt, Compton and Fernandez, 2014, pp. 13-17).

a) Code and syntax: in Shannon’s communication theory (cf. Shannon, 1948) we find, first of all, a code, that is, a symbolic alphabet allowing information to be attached to (i.e., written onto) some material support needed to carry it. Moreover, a syntax must be added so that the alphabet becomes able to code information. So we have: i) a set of conventional symbols, called the alphabet; ii) a set of conventional rules sufficient to state what is allowed in organizing the symbols, called the syntax. Nevertheless, information in itself is independent of the material support through which it travels or in which it is stored. Any of several carriers can be used interchangeably to carry exactly the same information. And the carrier, as such, cannot produce any information by itself, neither as efficient cause, nor as formal cause, nor as final cause.

b) Meaning is the essential attribute of information as it is coded into a language and communicated. Words, either written or spoken, may be used to represent symbolically entities of any kind. Moreover (and this is the relevance of symbols), the signified entities need not be physically present together with the words, since the latter stand for the former, represent them and communicate something about them as if they were actually present. Until now, it has always been observed experimentally that chemical and physical (i.e., purely material) processes, as such, are unable to perform any symbolic substitution. This holds true as far as processes not driven by any external control system informing their behavior are concerned.

c) Expected action: information appears as something sent by a sender to a receiver so that the latter executes a precise operation to achieve a goal. The receiver starts operating after reading and decoding the message. In some cases, the sequence of operations may be very long and difficult to execute. The receiver may also be required to “decide” whether the operation is to be executed or not, either completely or only partially. If the decision is “yes”, the operation will be executed as required by the sender. In particular, two kinds of receivers are to be distinguished: an intelligent and free receiver able to understand the meaning of the message, or a machine incapable of understanding and of choosing freely. The former, being intelligent, can answer the sender’s request by choosing freely among several different strategies. The latter, being an automaton, is totally driven by its control program. In both cases, machines may be necessary to perform the required operations.

d) Intended purpose: before the message is sent, the sender needs some internal mental process motivating him to formulate and send the message as such. This process is generally highly complex and involves some need, motivation or will that something be received and executed by somebody or something else. Especially when the operation is complex, the sender must carefully evaluate whether the chosen receiver is adequate to perform the request. If the whole process is successfully carried out, the sender’s intent will be achieved satisfactorily. So the sender’s intent appears to be essentially at the origin of the message. The receiver’s success in executing the sender’s intent is the result of the entire operation of information communication.

The previous four attributes seem to be required to characterize the notion of information unambiguously. This suggests a possible formal definition of universal information (UI), such as the following: “A symbolically encoded, abstractly represented message conveying the expected action and the intended purpose” (proposed in Gitt, Compton and Fernandez, 2014, p. 16). For such a definition to become scientifically viable, it should in turn be formalized in a suitable symbolic language, so that it can be used in computations (for computable matters) or in the frame of a qualitative analysis (for non-computable matters).
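Attribute a), and in particular the independence of information from its carrier, lends itself to a small illustration. In the sketch below the same message is written in two different alphabets, each with its own syntax: groups of eight binary digits separated by spaces, and pairs of hexadecimal digits. The choice of these two codes and the function names are assumptions made only for the example.

```python
def to_bits(message: str) -> str:
    """Write the message as space-separated groups of eight binary digits."""
    return " ".join(format(byte, "08b") for byte in message.encode("utf-8"))


def from_bits(carrier: str) -> str:
    """Read a message back from its binary-digit representation."""
    return bytes(int(group, 2) for group in carrier.split()).decode("utf-8")


def to_hex(message: str) -> str:
    """Write the message as a string of hexadecimal digits."""
    return message.encode("utf-8").hex()


def from_hex(carrier: str) -> str:
    """Read a message back from its hexadecimal representation."""
    return bytes.fromhex(carrier).decode("utf-8")


if __name__ == "__main__":
    message = "eductio formae"
    print(to_bits(message))
    print(to_hex(message))
    print(from_bits(to_bits(message)) == from_hex(to_hex(message)) == message)
```

The two coded strings share no characters beyond the digits 0 and 1 and have different lengths, yet both decode to the same message. The example covers only the syntactic level; meaning, expected action and intended purpose lie outside what such a program represents.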

The following remarks by Stuart Kauffman are relevant to our purpose: “I begin with Shannon’s famous information theory. Shannon chose, on purpose, to ignore any semantics, and concentrate on purely syntactic symbol strings, or “messages” over some pre-chosen symbol alphabet […]. It is clear that Shannon’s invention requires that the ensemble of all possible messages […] be stated ahead of time. Without this statement, the entropy of the information source cannot be defined. Now let’s turn to evolution. We saw above that we cannot pre-state the adjacent possibilities of the evolution of the biosphere by Darwinian pre-adaptations. Thus, we cannot construct anything like Shannon’s probability measure over the future evolution of the biosphere […]. The same concerns arise for Kolmogorov, who again requires a defined alphabet and symbol strings of some length distribution in that alphabet. Again, Kolmogorov uses only a syntactic approach. Life is deeply semantic with no pre-stated alphabet, no “Source”, no definable entropy of a source, but un-pre-statable causal consequences which alone or together may find a use in an evolving […] whole of a cell or organism. In summary, standard information theory, both purely syntactic and requiring a pre-stated sample space, is largely useless with respect to evolution. On the other hand, there is a persistent becoming of ever novel structures and processes that constitute specific novel and integrated functionalities in the […] wholes that co-create the evolving biosphere. […] We need a new theory of embodied functional information in a cell, ecosystem or the biosphere.” (Kauffman, 2014, pp. 521-522).

IV. Emergence and evolution of biological information

The role of information in biology raises at least three main questions in the context of scientific research.

a) The first question is related to the emergence, or the origin, of biological information. In Aristotelian terminology we should speak of the “eduction” of the form (Lat. eductio formae) from matter’s potency. So the problem of an adequate efficient cause for such an “eduction” arises each time a substantial mutation stably transforms some entity into another. In the contemporary scientific context this matter is often viewed as the problem of the production or increase of information within some system (physical, biological, etc.). It is often claimed that information may be produced or increased spontaneously, without adequate causation, thanks to the self-organizing capability of the system itself and out of a sequence of chance events.

b) A second question (strictly tied to the previous one) is related to the evolution of information – i.e., its change over time – and, in particular, its spontaneous increment within some system, especially a living one.

c) The last question concerns the coding and copying of biological information. Biological information is no longer considered as residing only in the DNA code. Rather, it appears as layered at several levels, even on the same biochemical, electro-chemical or, generally, physical medium.

The assumption that the complexity of life is only a spontaneous result of the non-linearity of chaotic systems has been shown to be incompatible with numerical simulation models implemented on computers, starting from their governing equations (cf. Basener, 2014, pp. 87-104 and related bibliography). Moreover, “The explosion in the amount of biological information […] requires explanation” (Sanford, 2014, p. 204). Unambiguously beneficial mutations (i.e., those that are non-damaging at any level) on which natural selection could act turn out to be extremely rare. Chance seems not to be enough to generate improvements without an adequate cause (cf. Montañez, Marks II, Fernandez and Sanford, 2014, pp. 139-167). On the contrary, a process of loss of information (genetic entropy) is revealed, because deleterious mutations turn out to be the most frequent ones. So, a sort of “defensive barrier”, preserving the stability of complexity, appears (cf. Nelson and Sanford, 2014, pp. 338-368).

As to the coding of biological information, scientists have observed that the genetic units consist of very precise instructions, coded in such a rich language that “any gene exhibits a level of complexity resembling that of a book” (Sanford, 2014, p. 203). Several languages (genetic codes) are present in the same genome, with multiple, even three-dimensional, levels coding biological information, forming a network with several layers. Computer simulation models have not succeeded in explaining either the emergence or the increment of information; nevertheless, both computer programs and the human genome exhibit similar repetitive code schemes (a careful investigation of those similarities has been carried out in Seaman, 2014, pp. 384-401).

Information is responsible for the emergence of organization and order within the structure of a system; thus, an increase in information implies an increase in order. However, numerical simulations – based on statistical mechanics and non-equilibrium thermodynamics – show that order is not spontaneously generated within the system, even if the latter is open (i.e. able to exchange matter and energy with the external environment). Information appears in a system only in the presence of a causal factor external to the system and acting on it. “If an increase in order is extremely improbable when a system is closed, it is still extremely improbable when the system is open, unless something is entering which makes it not extremely improbable” (Sewell, 2014, p. 174). The process of self-organization is activated thanks to the action of such an efficient/formal cause; this resembles what, according to the Aristotelian-Thomistic theory, is called the “eduction” of a substantial form from the potency of matter. Starting from our recent knowledge of the physics of non-linear systems and of the non-equilibrium thermodynamics governing open dissipative systems, attempts are being made to model the process of emergence of information from matter (emergence of an organized structure in matter) by means of stable attractors. The dynamics of those attractors, though it appears chaotic and dominated by chance, is able to construct ordered structures. Indeed, the phase trajectories that solve the dynamical equations, even when they start at random from different initial conditions belonging to a basin of attraction (which may even be fractal), tend to fill precise regions of the phase space. So a whole arises from a confluence of parts, which are only apparently separated, being on the contrary non-separable from the whole they are building, thanks to an information governing structure and dynamics. Kauffman’s intuition that a new notion of information is needed, one that is not merely statistical and syntactical but also involves semantic aspects, seems to drive research in the right direction.
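
The convergence of randomly started trajectories onto a precise region of phase space can be illustrated numerically. The following is only a minimal sketch under stated assumptions: the planar system dx/dt = x(1 − r²) − y, dy/dt = y(1 − r²) + x (with r² = x² + y²) is a textbook example chosen here for illustration, not a model taken from the cited literature. Its attractor is the unit circle, and its basin of attraction is the whole plane except the origin. The function names, the step size and the sampling range are likewise hypothetical choices.

```python
import math
import random


def step(x, y, dt):
    """One explicit Euler step of the illustrative planar system
    dx/dt = x(1 - r^2) - y,  dy/dt = y(1 - r^2) + x,  r^2 = x^2 + y^2."""
    r2 = x * x + y * y
    dx = x * (1.0 - r2) - y
    dy = y * (1.0 - r2) + x
    return x + dt * dx, y + dt * dy


def final_radius(x0, y0, dt=0.01, n_steps=5000):
    """Integrate from (x0, y0) and return the distance from the origin
    at the end of the run (total time = dt * n_steps)."""
    x, y = x0, y0
    for _ in range(n_steps):
        x, y = step(x, y, dt)
    return math.hypot(x, y)


def sample_final_radii(n_samples=20, seed=0):
    """Draw initial conditions at random inside the basin of attraction
    and record where each trajectory ends up."""
    rng = random.Random(seed)
    radii = []
    for _ in range(n_samples):
        r0 = rng.uniform(0.1, 2.0)              # random initial radius
        theta0 = rng.uniform(0.0, 2.0 * math.pi)  # random initial angle
        x0 = r0 * math.cos(theta0)
        y0 = r0 * math.sin(theta0)
        radii.append(final_radius(x0, y0))
    return radii


if __name__ == "__main__":
    for r in sample_final_radii():
        # Every value is close to 1; the Euler scheme adds a small
        # discretization bias, so the match is approximate, not exact.
        print(round(r, 3))
```

Whatever the random starting point, the final radius is close to 1: the initial condition is left to chance, while the region eventually occupied is fixed by the law of the dynamics.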

In particular, the idea that some asymptotically stable attractor may be a good information carrier ensures, on the one hand, the presence of some information leading to structured order emerging within a system; on the other hand, it allows chance to play a wide role in the system dynamics, since the choice of the initial conditions of the trajectories, within the basin of attraction, is left to chance without preventing all of them from asymptotically reaching the attractor itself. So, there is no law in the arbitrary choice of the initial conditions of the trajectories within the basin of attraction, and the behavior may even be unpredictable if the attractor is chaotic. However, some law exists within the dynamics of the system, involving some finality in the solution represented by its attractor. Such a finality (intended purpose) is a typical character of information. In principle, several analogous levels of organization and finality may be obtained by nesting several attractors into a hierarchy, so that attractors at some level are in turn attracted by a higher level, until some “first universal attractor” is reached. The latter, by definition, cannot be attracted further, in order to prevent the occurrence of logical paradoxes like that of the universal set.

i) A lower level of organization may for instance be provided by a set of stable attractors representing the molecules, the dynamics of which is governed by ii) a next-higher level of attractors organizing, e.g., cells, the dynamics of which is ruled by iii) a higher level of attractors representing the organs of a living system; iv) a still higher level of attractors shapes the structure and the functionalities of the organisms of different species; v) a further level of attractors organizes the species of living beings, and so on.

According to such a model of nested attractors, one could in principle conjecture the existence of a chain going from the level of the elementary particles up to the universe as a whole. The chain is broken when some attractor flips from stability to instability, because of the occurrence of some accidental cause modifying the values of the parameters involved in the law of its level of dynamics. When this happens, the second law of thermodynamics prevails locally, with the result of increasing disorder: the organization of the system is partially damaged or fully destroyed.
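
How a change in a parameter can flip a state from stability to instability may be seen in a one-dimensional toy example. This is a hedged sketch, not the model discussed in the literature cited here: the equation dx/dt = a·x − x³ (a standard normal form from bifurcation theory), the parameter name a, the size of the perturbation and the function name are all assumptions introduced for illustration. For a < 0 the state x = 0 is asymptotically stable; for a > 0 it becomes unstable and a small perturbation drives the system away from it.

```python
def relax(a, x0=0.01, dt=0.01, n_steps=2000):
    """Integrate dx/dt = a*x - x**3 with explicit Euler steps, starting
    from a small perturbation x0 of the state x = 0, and return the
    final value of x."""
    x = x0
    for _ in range(n_steps):
        x = x + dt * (a * x - x ** 3)
    return x


if __name__ == "__main__":
    # a < 0: the perturbation dies out and the state x = 0 is recovered.
    print(relax(-1.0))
    # a > 0: the same perturbation grows and the system leaves x = 0,
    # settling on a different state (here near x = 1).
    print(relax(1.0))
```

The only difference between the two runs is the sign of the parameter a; the initial perturbation is identical. This is the sense in which an accidental change of parameter values, rather than a change in the law itself, can break a link in the chain of attractors.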

The whole scheme of chained attractors may be regarded as a sort of fractal structure, even if it is not necessarily self-similar in all its properties. Research on these topics is open at present, and a sort of “wider” mathematics – resembling, at some level, a new version of ontology, suitably formalized – seems to be required.

V. Suggestions for philosophy and theology

The attention paid by the natural sciences to the concept of information promises to foster a sound dialogue between science, philosophy and theology. There are several reasons to explore such interdisciplinary work.

On the epistemological level, researchers have become aware of the philosophical relevance of the findings of the science of complexity, and of the related logical and ontological implications. This new awareness has also been brought about by the contemporary scientific interest in form and information. It seems that the boundaries of the “old Galilean science” methodology have been enlarged, to reach further lands of investigation that are properly considered philosophical. Physicists, biologists and mathematicians now meet with historians, sociologists and philosophers in common studies, as happened in the past, for instance in Ancient Greece or the Middle Ages. For example, some researchers engaged in socio-psychological investigation aimed at improving the quality of life have even proposed to rediscover the role of the Aristotelian virtues as an unavoidable condition for a sustainable society (cf. Peterson and Seligman, 2004).

As we have seen before, the scientific disciplines are widening their own field of investigation, in order to solve internal paradoxes and avoid internal contradictions. The search for a theory of foundations common to all disciplines (from the physical-mathematical ones to the human sciences) is driving scientific research onto the land of metaphysics, now approached with the rigorous methods of an extended mathematical logic (see, e.g., the attempts at formulating a formal ontology). Some universal notions, like that of the universal set and similar ones, have opened a way to rediscover “analogy” – beside the univocal character of mathematical definitions – and the ancient analogia entis (i.e. the idea that “entity” is said in partly different but not totally equivocal meanings). So real entities, too, may reasonably exist according to different but not totally disjoined modalities of being. They may actualize either as self-consistent things (substances) or as properties of something else (accidents); either as material bodies (matter-energy) or as non-material information (form). The cognitive sciences are now investigating the nature of mind and intelligence as capable of extracting universal non-material information from the observation of the material universe. The mediaeval philosophers had no difficulty in conceiving, besides the non-material form (information) which organizes the structure of a material body (carrier), also a kind of immaterial forms which are able to exist by themselves (lat. formae subsistentes), independently of a body. Indeed, no contradiction arises from assuming their existence, at least at a logical level. So even the world of self-consistent non-material entities (spiritual entities, according to philosophical and theological terminology) is not so far from today’s sciences.

A fruitful dialogue is also possible, more specifically, in the field of theology. Consider, for a moment, that in a cosmos created in the Word-Logos, as suggested by the Judaeo-Christian Revelation, information can be considered an original component of the world, and a condition for giving meaning to its evolution throughout history. A world created in the Logos possesses a positive quantity of information. Alongside matter and energy, information is recognized as a necessary component of the natural world. Thanks to the original role of information, the natural entities are something given: they have a quidditas, that is, specific formal properties. These properties are the source of our scientific knowledge of each material entity, because they are the basis of its lawful behavior. In a sense, here lies the idea that the sciences deal with “data”, that is, with information. According to the perspective of classical metaphysics, what is “given” is not only the being of each material entity, but also its properties; not only matter but also form. Actually, to recognize the real as given means to recognize the possibility of a source of meaning, of a source of form, of a source of information. Theology simply points out that if the universe is the effect of a Word-Logos, and therefore the effect of an Intelligence, then it is reasonable to accept that this Logos is the ultimate source of all the information in nature, as well as the One Who knows the overall design of the cosmos.

Books: V.I. ARNOLD, Ordinary differential equations (New York: Springer-Verlag, 1992); H. ATLAN, L’organisation biologique et la théorie de l’information (Paris: Hermann, 1972); G. AULETTA, Cognitive Biology. Dealing with Information from Bacteria to Minds (Oxford: Oxford University Press, 2011); M. BURGUETE and L. LAM (eds.), All about science. Philosophy, history and communication (Singapore: World Scientific, 2014); G.J. CHAITIN, Algorithmic information theory (Cambridge: Cambridge University Press, 1987); G.J. CHAITIN, Thinking about Gödel and Turing. Essays on Complexity, 1970–2007 (Singapore: World Scientific Publishing Co, 2007); P. DAVIES and N. GREGERSEN (eds.), Information and the Nature of Reality. From Physics to Metaphysics (Cambridge: Cambridge University Press, 2010); K. GÖDEL, Collected Works, vols. 1, 2, 3 (New York: Oxford University Press, 2001); H. HAKEN, Information and Self-Organization. A Macroscopic Approach to Complex Systems (Berlin - London: Springer, 2000); B.B. MANDELBROT, The fractal geometry of nature (New York: W.H. Freeman and Co., 1982); R.J. MARKS II et al. (eds.), Biological information. New perspectives (Singapore: World Scientific, 2014); H.-O. PEITGEN and P.H. RICHTER, The beauty of fractals. Images of complex dynamical systems (New York: Springer-Verlag, 1986-2018); H.-O. PEITGEN and D. SAUPE (eds.), The science of fractal images (New York: Springer-Verlag, 1988); C. PETERSON and M. SELIGMAN, Character strengths and virtues. A handbook and classification (Oxford – New York: Oxford University Press, 2004); I. PRIGOGINE, G. NICOLIS, Self-Organization in Non-Equilibrium Systems (New York: Wiley, 1977); C.E. SHANNON, W. WEAVER, The mathematical theory of communication (Urbana, IL: The University of Illinois Press, 1964); R. THOM, Structural stability and morphogenesis. An outline of a general theory of models (Cambridge, MA: Perseus Books, 1989); ID., Semiophysics. A sketch. Aristotelian physics and catastrophe theory (New York: Addison-Wesley, 1990); V. VEDRAL, Decoding Reality. The Universe as Quantum Information (New York: Oxford University Press, 2010); N. WIENER, Cybernetics. Or control and communication in the animal and the machine (Cambridge, MA: Technology Press, MIT, 1965); J. WICKEN, Evolution, Thermodynamics, and Information (New York: Oxford University Press, 1987); S. WOLFRAM, A new kind of science (Champaign, IL: Wolfram Media, 2002).

Papers: W.F. BASENER, Limits of Chaos and Progress in Evolutionary Dynamics, in R.J. MARKS II et al., 2014, pp. 87-104; W. EWERT, W.A. DEMBSKI and R.J. MARKS II, Tierra: The Character of Adaptation, in R.J. MARKS II et al., 2014, pp. 105-138; P.G. GIBSON, J.R. BAUMGARDNER, W.H. BREWER and J.C. SANFORD, Can Purifying Natural Selection Preserve Biological Information?, in R.J. MARKS II et al., 2014, pp. 232-263; W. GITT, R. COMPTON and J. FERNANDEZ, Biological Information. What is It?, in R.J. MARKS II et al., 2014, pp. 11-25; K. GÖDEL, On formally undecidable propositions of Principia Mathematica and related systems I [1931], in K. GÖDEL 2001, vol. 1, pp. 144-195; S. KAUFFMAN, Evolution Beyond Entailing Law. The Roles of Embodied Information and Self Organization, in R.J. MARKS II et al., 2014, pp. 513-532; G. MONTAÑEZ, R.J. MARKS II, J. FERNANDEZ and J.C. SANFORD, Multiple Overlapping Genetic Codes Profoundly Reduce the Probability of Beneficial Mutation, in R.J. MARKS II et al., 2014, pp. 139-167; C.W. NELSON and J.C. SANFORD, Computational Evolution Experiments Reveal a Net Loss of Genetic Information Despite Selection, in R.J. MARKS II et al., 2014, pp. 338-368; J.W. OLLER, Jr., Pragmatic Information, in R.J. MARKS II et al., 2014, pp. 64-86; J.C. SANFORD, Introduction to the 2nd meeting session, in R.J. MARKS II et al., 2014, pp. 203-209; E. SARTI, Information (2002), in INTERS – Interdisciplinary Encyclopedia of Religion and Science, edited by G. Tanzella-Nitti, I. Colagé and A. Strumia, DOI: 10.17421/2037-2329-2002-ES-1; J. SEAMAN, Dna.exe: A Sequence Comparison between the Human Genome and Computer Code, in R.J. MARKS II et al., 2014, pp. 385-401; G. SEWELL, Entropy, Evolution and Open Systems, in R.J. MARKS II et al., 2014, pp. 168-178; C.E. SHANNON, “A Mathematical Theory of Communication,” The Bell System Technical Journal, 27 (1948), pp. 379-423, 623-656; A. STRUMIA, Fractal Gallery: www.albertostrumia.it/?q=content/galleria-di-frattali-fractal-gallery; ID., Mechanics (2002), in INTERS – Interdisciplinary Encyclopedia of Religion and Science, edited by G. Tanzella-Nitti, I. Colagé and A. Strumia, DOI: 10.17421/2037-2329-2002-AS-4.